There has been a dramatic upgrade this year to the technology in the Applied Visualization Laboratory at the Center for Advanced Energy Studies (CAES), but just as a Formula One race car won’t do much without an experienced driver and pit crew, it’s the people who make things hum.

In AVL’s case, Dr. James Money and five interns have spent their summer pushing the envelope, devising new tools for turning massive data sets into visualizations that can be viewed in the new Cave Automatic Virtual Environment (CAVE), as well as on desktop and laptop computers, tablets and phones, and virtual reality headsets.

AVL’s mission is to provide researchers from universities, industry and other organizations with a user facility where they can visualize and address scientific and technical challenges.

The CAVE is where researchers, wearing stereoscopic glasses to create depth perception and using a wand to manipulate and control data, can study such things as contaminant flows through water systems, cross sections of graphite billets from a nuclear reactor, or electric vehicle driving and charging patterns. In addition to a new, larger, digitally powered CAVE, AVL has completed the installation of the wireless PhaseSpace tracking system that will allow researchers to use up to seven Samsung Gear VR applications.

Money’s interns this summer included Luke Kingsley (University of Utah), Rebecca L. Wild (James Madison University), Rajiv Khadka (University of Wyoming), Marko Sterbentz (University of Southern California) and Nathan Morrical (Idaho State University). To sum up their very complicated work in simple terms, the two best words might be “volume” and “speed.” For immersive visualizations to work seamlessly, terabyte- and exabyte-sized data sets have to be loaded and processed in real time.

Morrical, who is pursuing a bachelor’s in computer science, was recognized at the Idaho National Laboratory Intern Expo in August for his poster presentation, “Optimizing Volumes for Visualization.”

Data sets are often too large to fit in a graphics card’s memory. To address this, Morrical has delved into the possibilities of “hierarchical Z (HZ)” ordering, which translates data from a Cartesian coordinate grid to a one-dimensional array ordered by locality at different resolution levels. Volumes built from experimentally collected image stacks, such as X-ray and CT scans, were converted to the HZ ordering for efficient access.
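To make the idea concrete, here is a minimal Python sketch of one commonly described way to build such an ordering. It is not Morrical's code, which the article does not show; the function names `morton3`, `hz_index` and `hz_level` are hypothetical. The first step interleaves the bits of the three grid coordinates into a Z-order (Morton) index, and the second rearranges that index so that coarse samples come first.

```python
def morton3(x, y, z, bits):
    """Interleave the low `bits` bits of x, y and z into one Z-order index."""
    code = 0
    for i in range(bits):
        code |= ((x >> i) & 1) << (3 * i)
        code |= ((y >> i) & 1) << (3 * i + 1)
        code |= ((z >> i) & 1) << (3 * i + 2)
    return code


def hz_index(z_index, total_bits):
    """Map a Z-order index to an HZ index so coarser samples sort first.

    Assumed construction: set a guard bit above the index, strip the
    trailing zeros, then drop the lowest set bit.
    """
    guarded = z_index | (1 << total_bits)  # guard bit above the index
    guarded //= guarded & -guarded         # strip trailing zero bits
    return guarded >> 1                    # drop the lowest set bit


def hz_level(hz):
    """Resolution level of an HZ index: level h spans [2**(h-1), 2**h)."""
    return hz.bit_length()


# A 4x4x4 volume uses 2 bits per axis, so 6 bits in total.
order = sorted(
    ((x, y, z) for x in range(4) for y in range(4) for z in range(4)),
    key=lambda p: hz_index(morton3(*p, bits=2), total_bits=6),
)
```

Under this construction, reading only the first part of the array yields a coarse but complete version of the volume, and each further block refines it.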

To cut the computation time from days or weeks to minutes, he used Open Computing Language (OpenCL), a framework for parallel processing using graphics processing units (GPUs) and other accelerator platforms. These GPU calculations enabled the development of an HZ storage format for the rendering in Sterbentz’s project, “Adaptive Volume Rendering for Exascale Data Using Immersive Environments.”

As most rendering systems are limited by the size of their video memory, Sterbentz focused on software based on the “ray marching algorithm” using the HZ format. For each pixel on the screen, a ray is cast from the camera and checked to see if it intersects the data volume. If an intersection is found, samples of the data’s intensity values are taken at equidistant points along the ray, and then blended to produce a final pixel color.
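The steps described above can be sketched in a few lines of Python. This is an illustrative sketch only, not Sterbentz's renderer. It assumes the volume fills the unit cube, uses a hypothetical `sample` callback that returns an intensity between 0 and 1 at a point, and blends samples front to back into a single gray value.

```python
def intersect_unit_cube(origin, direction):
    """Slab test: return (t_near, t_far) where the ray crosses [0, 1]^3, or None."""
    t_near, t_far = 0.0, float("inf")
    for o, d in zip(origin, direction):
        if abs(d) < 1e-12:
            # Ray is parallel to this pair of faces; it must start between them.
            if o < 0.0 or o > 1.0:
                return None
            continue
        t0, t1 = (0.0 - o) / d, (1.0 - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        t_near, t_far = max(t_near, t0), min(t_far, t1)
        if t_near > t_far:
            return None
    return t_near, t_far


def march_ray(sample, origin, direction, step=0.05, opacity_scale=0.5):
    """Blend equidistant samples along one ray; returns (color, alpha)."""
    hit = intersect_unit_cube(origin, direction)
    if hit is None:
        return 0.0, 0.0  # the ray misses the volume
    t, t_far = hit
    color, alpha = 0.0, 0.0
    while t <= t_far and alpha < 0.99:  # stop early once nearly opaque
        point = tuple(o + t * d for o, d in zip(origin, direction))
        intensity = sample(point)
        a = min(1.0, intensity * opacity_scale)
        color += (1.0 - alpha) * a * intensity  # front-to-back blending
        alpha += (1.0 - alpha) * a
        t += step
    return color, alpha


# One ray through a uniformly bright volume, looking down the z axis.
pixel = march_ray(lambda p: 1.0, (0.5, 0.5, -1.0), (0.0, 0.0, 1.0))
```

A full renderer would run this once per pixel, typically on the GPU, and would map intensities to colors with a transfer function rather than a single gray value.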

Using the HZ-order data format, the raw data could be reordered, allowing for a smaller but still representative portion of the data to be used for rendering.

“(The) sections of the data that are closer to the user’s view are rendered at higher levels of detail than sections that are further away,” Sterbentz said. “The result is a high-fidelity representation of that data that only requires a small fraction of the data set.”
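One simple way to express that kind of distance-based selection is to drop one resolution level each time the distance from the viewer doubles. The Python sketch below illustrates that heuristic only. The article does not say how the AVL software chooses levels, and `level_for_distance` and its parameters are hypothetical.

```python
import math


def level_for_distance(distance, max_level, full_detail_distance=1.0):
    """Pick a resolution level: full detail up close, one level less per doubling."""
    if distance <= full_detail_distance:
        return max_level
    drop = int(math.floor(math.log2(distance / full_detail_distance)))
    return max(0, max_level - drop)


# Levels chosen for blocks at increasing distances from the viewer.
levels = [level_for_distance(d, max_level=8) for d in (0.5, 2.0, 4.0, 1000.0)]
```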

The result is fully interactive, multiresolution viewing across a range of devices, from desktops to virtual reality headsets to the CAVE.

The Unity 3D game engine enabled the AVL team to build software for systems ranging from the CAVE to desktop computers to VR headsets to mobile phones. The result is the first exascale platform for volume visualization, which will be released as open-source software later this fall.

Back for his third summer as a CAES intern, Khadka focused on developing a collaborative virtual environment (CVE) across disparate virtual reality systems, enabling collaborators to work together whether in 2-D or 3-D environments.

“It’s not bound to any kind of hardware system,” he said. “The main issue was the networking, properly mapping all that data from the CAVE to the desktop and from the desktop to the CAVE.”

Once one collaborator can view data in the CAVE while another views it on a laptop, tablet, headset or phone, it is only a small step to doing the same across wide geographical areas, he said. While seeking to understand the architecture, Khadka relied on a third-party server that offered modularity, which allowed the CAES researchers to pursue collaborative analytics across these environments. Khadka was also involved in a three-week study with researchers from across INL (Materials & Fuels Complex, GIS, High Performance Computing) aimed at cataloging their data visualization needs.

Kingsley noticed that while AVL has transitioned to the University of Wisconsin’s open-source UniCAVE library for large-format displays, which provides a set of packages for dropping in standard Unity 3D applications, UniCAVE lacked a generic tooling interface for user interactions. His goal was to create a generic library in Unity and a customizable tool interface that would make the library compatible with all the different stations in the lab.

One way he found of interacting with objects in a virtual environment was a raycast, which projects an invisible line from the wand or WiiMote into the scene and returns references to the objects it intersects, allowing the user to manipulate multiple data sets. The resulting tools became integral components of the other interns’ final projects and have now replaced commercial software previously used in the CAVE and IQ-Stations.
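The raycast idea can be illustrated outside Unity with a short Python sketch. It is not Kingsley's library and does not use Unity's API. It assumes each object is approximated by a bounding sphere given as a hypothetical `(name, center, radius)` tuple, and it returns the names of the objects the ray hits, nearest first.

```python
import math


def raycast(origin, direction, objects):
    """Return names of objects whose bounding spheres the ray hits, nearest first."""
    length = math.sqrt(sum(c * c for c in direction))
    d = tuple(c / length for c in direction)  # normalized ray direction
    hits = []
    for name, center, radius in objects:
        to_center = tuple(c - o for c, o in zip(center, origin))
        t_closest = sum(a * b for a, b in zip(to_center, d))
        dist_sq = sum(a * a for a in to_center) - t_closest ** 2
        if dist_sq > radius ** 2:
            continue  # the ray passes outside the sphere
        half_chord = math.sqrt(radius ** 2 - dist_sq)
        t_hit = t_closest - half_chord
        if t_hit < 0.0:
            t_hit = t_closest + half_chord  # the ray starts inside the sphere
        if t_hit < 0.0:
            continue  # the sphere is entirely behind the ray origin
        hits.append((t_hit, name))
    hits.sort()
    return [name for _, name in hits]


# A wand at the origin pointing down +z at four hypothetical objects.
scene = [
    ("far", (0.0, 0.0, 10.0), 1.0),
    ("near", (0.0, 0.0, 3.0), 1.0),
    ("off_axis", (5.0, 0.0, 3.0), 1.0),
    ("behind", (0.0, 0.0, -5.0), 1.0),
]
picked = raycast((0.0, 0.0, 0.0), (0.0, 0.0, 2.0), scene)
```

Returning every hit rather than only the first is what lets a single gesture select several overlapping data sets at once.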

The next step in virtual reality interactions will be a tracking system that lets users interact using only their hands. Kingsley plans to build on his experience from this summer and investigate Leap Motion’s hand-based tracking system.

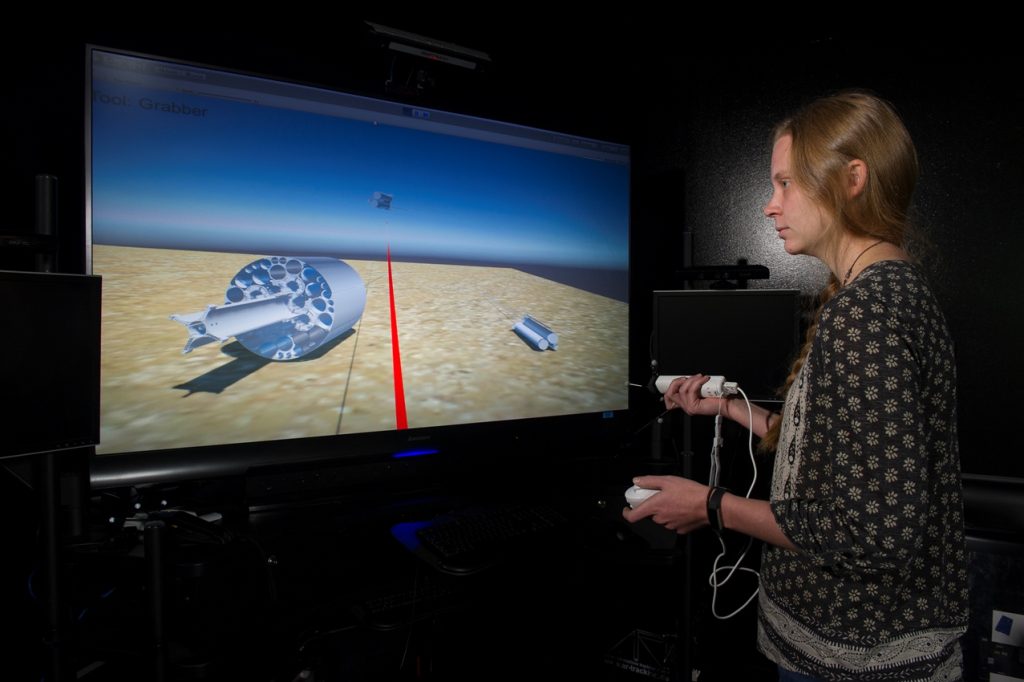

Closer to home, Wild chose to focus on bringing AVL’s resources to bear on INL’s Advanced Test Reactor, creating a VR environment that simulates common maintenance tasks and providing real-time visualization of the heat distribution in the reactor vessel.

“We wanted to examine the ability of VR technology and compare it to what is currently provided by full-scale mock-ups,” she said. “To do this, we created a VR environment and gave the user functionality to move, rotate and lock together components.”

To accomplish this, she used a computer-aided design model of ATR, which she partitioned into 200 main components. Scripts were coded so a user could move and rotate components with a wand, lock them together and assemble the ATR from its component pieces in a VR environment. A version of this program was developed for the Samsung Gear VR to permit viewing at conferences and other venues. The heat distribution algorithm was drafted in MATLAB, written in C++, then integrated and parallelized with MPI. Future work for this project includes an in-situ connection for modeling changes in heat with the ATR construction model.

Four of the five AVL interns were finished after the Aug. 10 intern expo. The last left in late August. Money said their work makes a big difference. “They help us build capability in a very cost-effective way,” he said.

Work by Morrical and Sterbentz included algorithms no one had seen before, he said. “(They) really got into it. It was pretty leading edge.”